Those of us who have spent the last few decades reporting on technology have seen fads and fashions rise and fall on investment bubbles.

In the late 1990s it was dot-com companies; more recently, crypto, blockchain, NFTs, driverless cars, the “metaverse.” All have had their day in the sun amid promises they would change the world, or at least banking and finance, the arts, transportation, society at large. To date, those promises are spectacularly unfulfilled.

That brings us to artificial intelligence chatbots.

Since last October, when I raised a red flag about hype in the artificial intelligence field, investor enthusiasm has only grown (as have public fears).

Wall Street and venture investors are pouring billions of dollars into AI startups — Microsoft alone made a $10-billion investment in OpenAI, the firm that produced the ChatGPT bot.

Companies scratching for capital have learned that they need only claim an AI connection to bring investors to their doors, much as startups typed “dot-com” onto their business plans a couple of decades ago. Nvidia Corp. has acquired a trillion-dollar market value on the strength of a chip it makes that’s deemed crucial for the data-crunching required by AI chatbots.

AI promoters are giddy about their products’ capabilities (and potential profits).

Here’s venture capitalist Marc Andreessen: Among the boons in “our new era of AI,” he wrote recently, “every child will have an AI tutor that is infinitely patient, infinitely compassionate, infinitely knowledgeable, infinitely helpful”; every person “an AI assistant/coach/mentor/trainer/advisor/therapist”; every scientist “an AI assistant/collaborator/partner”; every political leader the same superintelligent aide.

There isn’t much to be said about this prediction, other than that it’s endearing in its childlike naivete, given that in today’s world we still can’t get broadband internet connections, which originated in the 1990s, to millions of Americans. Would it surprise you to know that Andreessen’s venture firm has sunk investments into more than 40 AI-related companies? Me neither.

Andreessen also wrote: “Anything that people do with their natural intelligence today can be done much better with AI.”

That’s provably untrue. Examples of AI confounding its users’ expectations have been piling up on almost a weekly basis.

Among the most famous instances is that of a New York lawyer who filed a brief in a federal court lawsuit citing or quoting dozens of fictitious suits generated by ChatGPT. When the judge ordered the lawyer to verify the citations, he asked ChatGPT if they were for real, which is a bit like asking a young mother if her baby is the most adorable baby ever. The bot said sure, they are: another “hallucination,” the industry’s term for a chatbot’s confident fabrication.

In the end, the lawyer and his associates were fined $5,000 and ordered to write abject letters of apology to the opposing parties and all the judges the bot had falsely identified with the fake cases. He also lost the lawsuit.

Reports of other similar fiascos abound. An eating disorder association replaced the humans staffing its helpline with a chatbot, possibly as a union-busting move — but then had to take the bot offline because, Vice reported, it was encouraging callers to undertake “unhealthy eating habits.”

A Texas professor flunked an entire class because ChatGPT had claimed to be the author of their papers. The university administration exonerated almost all the students after they proved the bot was wrong; someone even submitted the professor’s PhD dissertation to the bot, which claimed to have written that, too.

Claims made for the abilities or perils of AI chatbots have often turned out to be mistaken or chimerical.

A team of MIT researchers purported to discover that ChatGPT could ace the school’s math and computer science curricula with “a perfect solve rate” on the subject tests; their finding was debunked by a group of MIT students. (The original paper has been retracted.) An eye-opening report that an AI program in an Air Force simulation “killed” its human operator to pursue its programmed goals (HAL-style) turned out to be fictitious.

So it’s useful to take a close look at what AI chatbots can and can’t do. We can start with the terminology. For these programs, “artificial intelligence” is a misnomer. They’re not intelligent in anything like the sense that humans and animals are intelligent; they’re just designed to seem intelligent to an outsider unaware of the electronic processes going on inside. Indeed, using the very term distorts our perception of what they’re doing.

That problem was noticed by Joseph Weizenbaum, the designer of the pioneering chatbot ELIZA, which replicated the responses of a psychotherapist so convincingly that even test subjects who knew they were conversing with a machine thought it displayed emotions and empathy.

“What I had not realized,” Weizenbaum wrote in 1976, “is that extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.” Weizenbaum warned that the “reckless anthropomorphization of the computer” — that is, treating it as some sort of thinking companion — had produced a “simpleminded view … of intelligence.”

Even the most advanced computers had no ability to acquire information other than by being “spoon-fed,” Weizenbaum wrote. That’s true of today’s chatbots, which acquire their data by “scraping” text found on the web; it’s just that their capacity to gorge on data is so much greater now, thanks to exponential improvements in computing power, than it was in 1976.

The chatbots attracting so much interest today are what AI researchers call “generative” AI: programs that use statistical rules to answer queries by extrapolating from data they’ve previously acquired.

At its heart, says Australian technologist David Gerard, ChatGPT is “a stupendously scaled-up autocomplete,” like a word-processing program predicting the end of a word or sentence you’ve started typing. The program “just spews out word combinations based on vast quantities of training text.”
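To make the autocomplete comparison concrete, here is a deliberately tiny sketch of the idea in Python. It is not how ChatGPT is built; real chatbots use neural networks trained on vastly more text. The function names (`train_bigrams`, `autocomplete`) and the three-sentence corpus are hypothetical, invented purely for illustration. The program counts which word follows which in its training text, then extends a prompt by always choosing the most frequent follower.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def autocomplete(followers, prompt, max_words=5):
    """Extend the prompt by repeatedly appending the most frequent follower."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_words):
        candidates = followers.get(words[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical toy corpus (an assumption for illustration only).
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)

print(autocomplete(model, "the dog", max_words=4))
# prints "the dog sat on the cat"
```

Note what happened: the training text says the dog sat on the rug, but the program produced a fluent sentence that appears nowhere in its data, simply because “cat” is the word that most often follows “the.” Nothing in the code checks whether the output is true.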

The professional enthusiasm for these programs — predictions that they’ve moved us a step closer to true artificial intelligence, or that they’re capable of learning, or that they harbor the potential to destroy the human race — may seem unprecedented.

But it’s not. The same cocksure predictions — what Weizenbaum called “grandiose fantasies” — have been part of the AI world since its inception. In 1970, Marvin Minsky of MIT, one of the godfathers of AI, told Time magazine that “In from three to eight years we will have a machine with the general intelligence of an average human being … a machine that will be able to read Shakespeare, grease a car, play office politics, tell a joke, and have a fight. … In a few months, it will be at genius level and a few months after that its powers will be incalculable.”

Minsky and his contemporaries ultimately had to recognize that the programs that seemed to show limitless potential were adept only within narrow confines. That’s still true.

ChatGPT can turn out doggerel poetry or freshman and sophomore essays, pass tests on some technical subjects, write press releases, and compile legal filings with a veneer of professionalism.

But these are all generic classes of writing; samples turned out by humans in these categories often have a vacuous, robotic quality. Asked to produce something truly original or creative, chatbots fail or, as those hapless lawyers discovered, fabricate. (When Charlie Brooker, creator of the TV series “Black Mirror,” asked ChatGPT to write an episode, the product was of a quality he described with an unprintable epithet.)

That may give pause to businesses hoping to hire chatbots to cut their human payrolls. When they discover that they may have to employ workers to vet chat output to avoid attracting customer wrath or even lawsuits, they may not be so eager to give bots even routine responsibilities, much less mission-critical assignments.

In turn, that may hint at the destiny of the current investment frenzy. “The optimistic AI spring of the 1960s and early 1970s,” writes Melanie Mitchell of the Santa Fe Institute, gave way to the “AI winter” in which government funding and popular enthusiasm collapsed.

Another boom, this time over “expert systems,” materialized in the early 1980s but had faded by the end of the decade. (“When I received my PhD in 1990, I was advised not to use the term ‘artificial intelligence’ on my job applications,” Mitchell writes.) Every decade seemed to have its boom and bust.

Is AI genuinely threatening? Even the terrifying warnings issued about the perils of the technology and the need for regulation look like promotional campaigns in disguise. The ulterior motive of those issuing them, writes Ryan Calo of the University of Washington, is to “focus the public’s attention on a far-fetched scenario that doesn’t require much change to their business models” and to convince the public that AI is uniquely powerful (if deployed responsibly).

Interestingly, Sam Altman, CEO of OpenAI, has lobbied the European Union not to “overregulate” his business. EU regulations, as it happens, would focus on near-term issues related to job losses, privacy infringements and copyright violations.

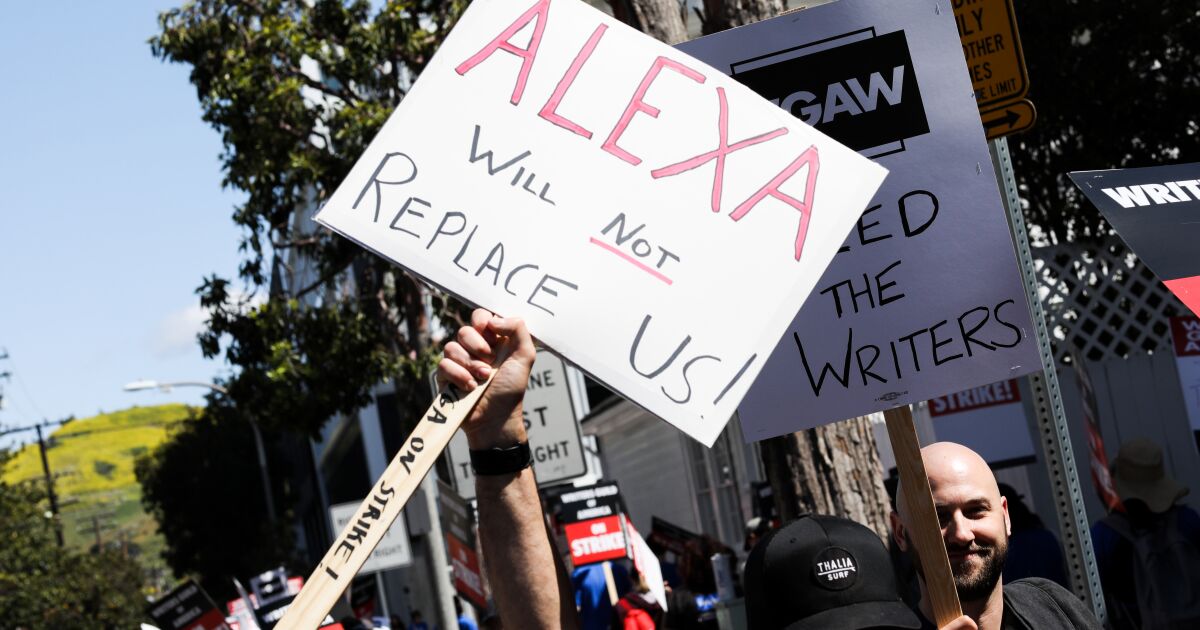

Some of those concerns are motivations of the writers’ and actors’ strikes going on now in Hollywood — the union members are properly concerned that they may lose work to AI bots exploited by cheapskate producers and studio heads who think audiences are too dumb to know the difference between human and robotic creativity.

What’s scarcely acknowledged by today’s AI entrepreneurs, like their predecessors, is how hard it will be to jump from the current class of chatbots to genuine machine intelligence.

The image of human intelligence as the product merely of the hundred trillion neural connections in the human brain (a number unfathomable by the human brain) leads some AI researchers to assume that once their programs reach that scale, their machines will become conscious. Yet the last hard problems in achieving intelligence, much less consciousness, may be insurmountable: Human researchers haven’t even agreed on a definition of intelligence and have failed to identify the seat of consciousness.

Is there a reasonable role in our lives for chatbots? The answer is yes, if they’re viewed as tools to augment learning, not for cheating; teachers are worried about identifying chat-generated assignments, but in time they’ll use the same techniques they already use to identify plagiarism: comparing the work to what they know of their students’ capabilities and rejecting submissions that look too, too polished (or have identifiable errors).

ChatGPT can help students, writers, lawyers and doctors organize large quantities of information or data to help get ideas straight or produce creative insights. It can be useful in the same way that smart teachers advise students to use other web resources with obvious limitations, such as Wikipedia: They can be the first place one consults in preparing an assignment, but they had better not be the last.