Paul Perrone is the Founder/CEO of Perrone Robotics and a pioneer in autonomous vehicles. Expert in AVs, AI and robotics.

By now, you have likely heard whispers about ChatGPT, are already immersed in it, or are somewhere in between. You may have seen examples of human-entered requests and responses from this class of generative pretrained transformer (GPT) AIs.

GPTs seem to be particularly good at generating software code. But what if someone were to ask a GPT AI to create code for an autonomous robot? Consider this exchange.

Request1: Create a maneuver for a robot to navigate to a designated payload cell, hitch to the payload, navigate to a designated drop-off cell and then unhitch the payload.

Response1: Done. Uploaded to robot fleet.

The outcome of the dialogue above is possible today. But consider this additional request.

Request2: If the drop-off cell is a disposal cell and the payload is not a disposable item, then do not initiate the maneuver.

Response2: Done. Uploaded to robot fleet.

This is also possible.

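Now suppose that, in Request2, the developer accidentally left out the word “not” when referring to the disposable item. The rule would then block disposable payloads from reaching the disposal cell while letting everything else through. Aside from the trash not being carried out, the result could be the inadvertent disposal of valuable items. This is also possible.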
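The effect of that one missing word can be sketched in a few lines of Python. This is only an illustration: `Payload`, `Cell` and both guard functions are hypothetical names invented here, not part of any real robot API or of the code a GPT AI would actually return.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Payload:
    name: str
    disposable: bool


@dataclass(frozen=True)
class Cell:
    name: str
    is_disposal: bool


def may_start_maneuver(payload: Payload, drop_off: Cell) -> bool:
    """Request2 as written: refuse non-disposable payloads bound for a disposal cell."""
    if drop_off.is_disposal and not payload.disposable:
        return False
    return True


def may_start_maneuver_missing_not(payload: Payload, drop_off: Cell) -> bool:
    """Request2 with the word 'not' left out: refuses disposable payloads instead."""
    if drop_off.is_disposal and payload.disposable:
        return False
    return True


# Hypothetical example data.
trash = Payload("trash bag", disposable=True)
laptop = Payload("laptop", disposable=False)
disposal = Cell("disposal", is_disposal=True)

for item in (trash, laptop):
    print(
        item.name,
        "| intended rule allows:", may_start_maneuver(item, disposal),
        "| mistaken rule allows:", may_start_maneuver_missing_not(item, disposal),
    )
```

Under the intended rule the trash bag goes to disposal and the laptop does not. Under the mistaken rule the outcome is reversed, even though the two functions differ by a single token.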

Right Tool For The Job

Like any tool, GPT AI is good at some things and not so good at others.

Asking a GPT AI questions about politics and the meaning of life may be entertaining but may not be the best use of this new tool. However, GPT AIs are particularly good at generating and debugging code. Very often, the simpler the code, the more likely the GPT AI is to produce correct results on the first try.

As the complexity of requests increases, it becomes essential to work iteratively, with follow-up requests that improve the code, fix errors and clarify desired outcomes. As a collaborator in development, GPT AI can turn a coding task that would ordinarily take weeks or months to complete into a task that takes hours or days.

In the field of robotics, we think of a robot as following a “sense-plan-act” process. That is, the robot first senses something from the environment using sensors such as cameras. Then, the robot uses this information, along with stored knowledge, to decide what maneuvers and actions to undertake. And then, the robot takes action by actuating motors to drive wheels, control an arm or trigger some physical event.
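The sense-plan-act process can be sketched as a simple loop. The example below is a toy under stated assumptions: a hypothetical `Robot` that lives on a one-dimensional track, "senses" by reading its own position, plans a single step toward a goal and acts by moving. Real robots replace each method with sensor drivers, planners and motor controllers.

```python
from dataclasses import dataclass


@dataclass
class Robot:
    position: int = 0  # hypothetical 1-D position on a track

    def sense(self) -> int:
        # Stand-in for reading a camera or other sensor.
        return self.position

    def plan(self, sensed_position: int, goal: int) -> int:
        # Decide the next step: +1, -1, or 0 when the goal is reached.
        if sensed_position < goal:
            return 1
        if sensed_position > goal:
            return -1
        return 0

    def act(self, step: int) -> None:
        # Stand-in for actuating motors.
        self.position += step


def run(robot: Robot, goal: int, max_cycles: int = 100) -> int:
    """Repeat sense-plan-act until the goal is reached; return the cycles used."""
    for cycle in range(max_cycles):
        observed = robot.sense()
        step = robot.plan(observed, goal)
        if step == 0:
            return cycle
        robot.act(step)
    return max_cycles


robot = Robot()
cycles = run(robot, goal=3)
print(f"reached position {robot.position} after {cycles} cycles")
```

The `max_cycles` bound is a design choice for the sketch: it keeps the loop from running forever if the plan never converges.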

Robots have computers, sensors and actuators to implement this process. They sense the physical world and directly produce an effect in the physical world. By integrating GPT AI with robotics, code creation requests that we make of the GPT AI likewise can lead to the production of an effect in the physical world.

The alluring benefit of such an integration is the rapid generation of many new capabilities. With GPT AI, robot behaviors that previously took months or years to create can now take days or weeks. A 10-fold or even 100-fold increase in capabilities is possible now, and the pace is accelerating.

Getting It Wrong Or Right

The impact of connecting GPT AI with the physical world via robotics depends on what is being controlled. If we’re controlling a vacuum cleaning robot, the most serious effects may be limited to annoying a household pet. If it’s an entertainment robot, the worst outcome may be a disappointing experience.

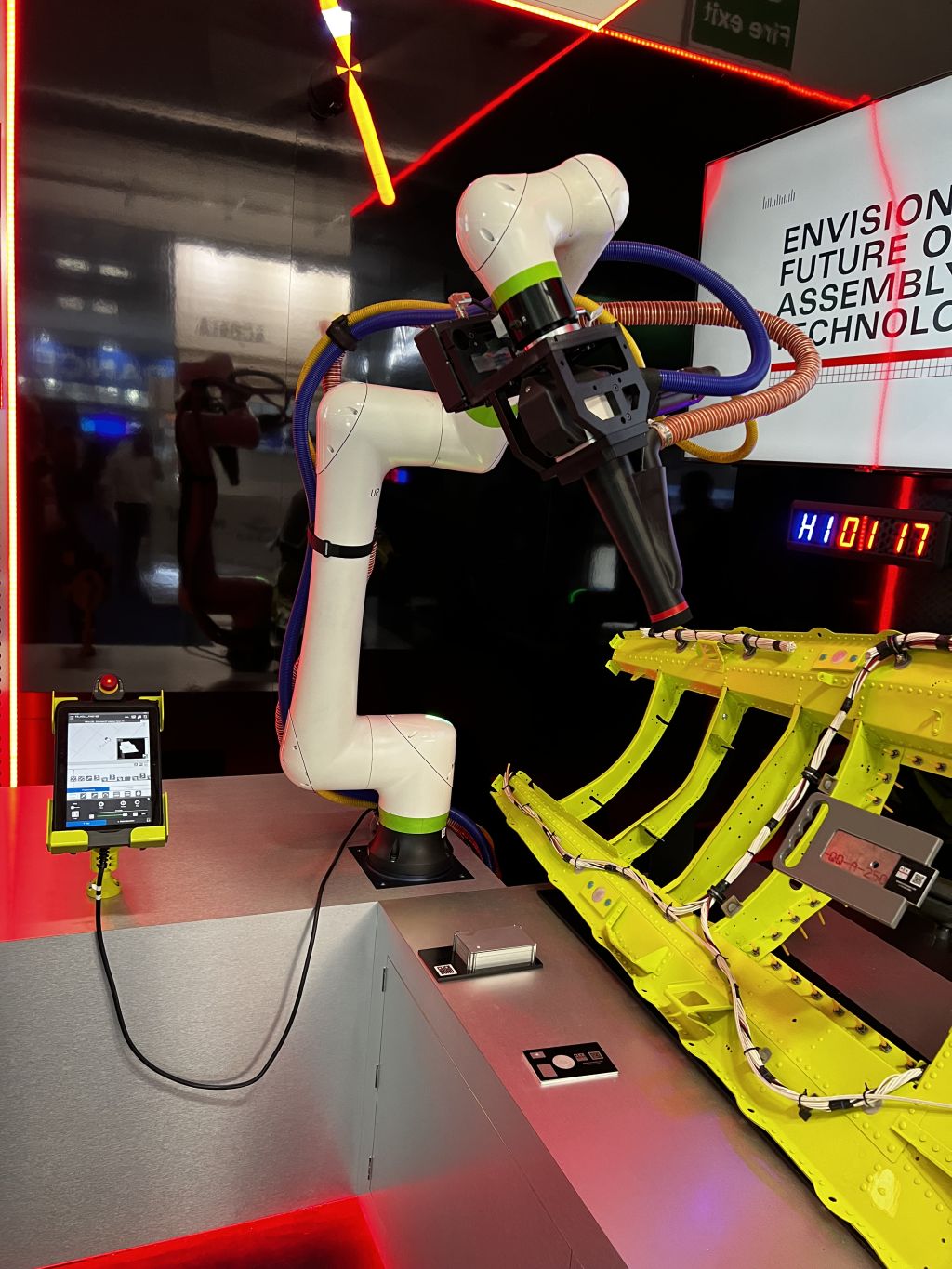

However, if we’re talking about larger robotic platforms like manufacturing arms, large service robots or an autonomous car, then the size, weight, speed and resultant energy packed by such robots can cause serious damage to people and environments. If the robots are carrying payloads that pack even more energy, such as weapons or explosive materials, the effects can not only be lethal at scale but also pose existential risks to humanity.

As with any new technology, this one will inevitably be applied, by accident or with purposeful intent, in a way that can adversely affect life. Consortiums and governments can attempt to intervene. But it is impossible to dictate what goes on behind closed doors across 195-plus sovereign nations and eight billion people.

Much as there was “computerphobia” in the 1980s as personal computing blossomed and broader technology phobia in the 1990s as the internet took off, we’re inevitably going to experience new forms of AI and robotics phobia now. However, we’ve also seen the immense real benefits that have come from technological advances throughout history.

Keeping It In Check

With GPT AI’s fusion with robotics, we’ll see more capabilities for automated interfaces with the physical world. This means better and more useful home and entertainment robots, automated industrial applications, service robotics, automated driving and more.

Nevertheless, we will also see new accidents and purposeful adverse impacts in this world. This is where creating checks to watch an automated system comes into play. Sometimes referred to as “watchdog checkers,” these independent systems can monitor and detect hazardous and malicious events before they lead to accidents or attacks. They can trigger fail-safe conditions, which prevent the adverse consequences from occurring.

And here, too, GPT AI can be put to good work. GPT AI can facilitate the creation of watchdog checker rules. An increasingly comprehensive and complete set of rules can be specified and uploaded to independent fail-safe watchdog checkers. And thus, a 10-fold, 100-fold and greater response of countermeasures can be undertaken to keep the robots in check, whether the watchdog checkers are keeping friendly robots or adversarial robots under control.

This is the duality of creating robots. We create the thing. And then, we create the thing that checks the thing. This will be particularly important for creating robots that are fused with GPT AI. For example, consider this exchange.

Request3: Create a fail-safe watchdog check rule which stops the robot before it drops off nondisposable items at disposal cells.

Response3: Done. Uploaded to robot fleet.

This is also possible. This is critical.
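A watchdog check rule like the one in Request3 might look something like the sketch below. Every name here (`DropOffCommand`, `Watchdog`, `no_valuables_in_disposal`, `execute`) is hypothetical and chosen for illustration. The point is only that the checker sits apart from the maneuver code and can veto a command regardless of how that command was generated.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class DropOffCommand:
    payload_name: str
    payload_disposable: bool
    cell_is_disposal: bool


# A rule returns a reason string when violated, or None when satisfied.
Rule = Callable[[DropOffCommand], Optional[str]]


def no_valuables_in_disposal(cmd: DropOffCommand) -> Optional[str]:
    """Request3: never drop a non-disposable item at a disposal cell."""
    if cmd.cell_is_disposal and not cmd.payload_disposable:
        return f"{cmd.payload_name} is not disposable"
    return None


class Watchdog:
    """Independent checker that evaluates every rule against a command."""

    def __init__(self, rules: List[Rule]) -> None:
        self.rules = list(rules)

    def check(self, cmd: DropOffCommand) -> List[str]:
        violations = []
        for rule in self.rules:
            reason = rule(cmd)
            if reason is not None:
                violations.append(reason)
        return violations


def execute(cmd: DropOffCommand, watchdog: Watchdog) -> str:
    # The watchdog runs before the action, so a violation triggers a fail-safe stop.
    violations = watchdog.check(cmd)
    if violations:
        return "FAIL-SAFE STOP: " + "; ".join(violations)
    return "dropped off " + cmd.payload_name


watchdog = Watchdog([no_valuables_in_disposal])
print(execute(DropOffCommand("trash bag", True, True), watchdog))
print(execute(DropOffCommand("laptop", False, True), watchdog))
```

Because the rule lives outside the maneuver logic, a mistake such as the missing “not” in Request2 would still be caught here, provided the watchdog rule itself was specified correctly.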

Forbes Technology Council is an invitation-only community for world-class CIOs, CTOs and technology executives.